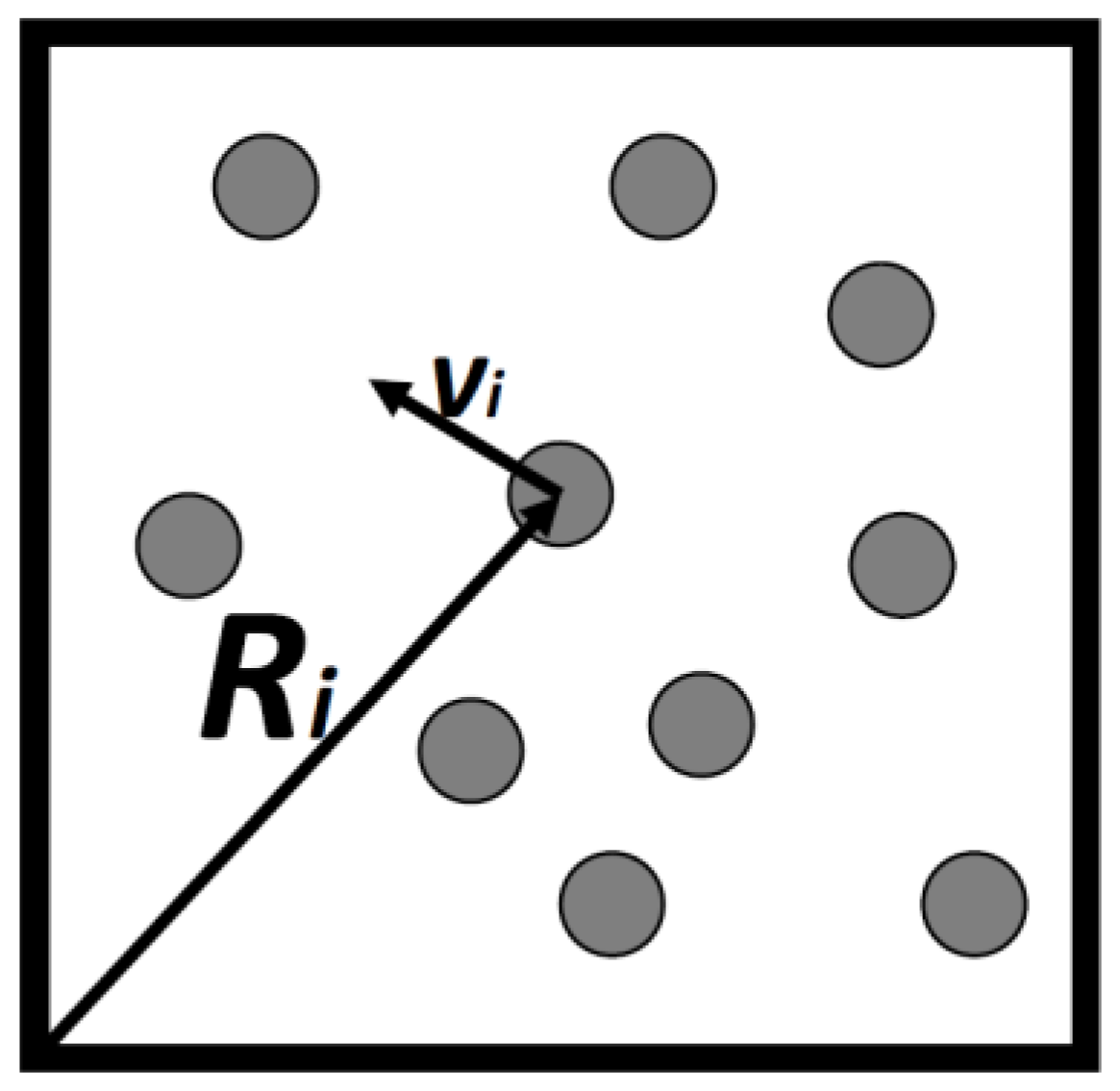

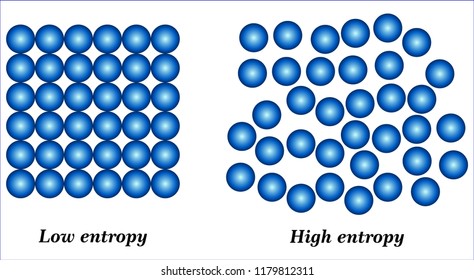

Entropy can mean different things. In computing, entropy is the randomness collected by an operating system or application for use in cryptography or other uses that require random data. In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Entropy is also used to describe energy dispersal and the idea of irreversibility.

For a string, once we have the probability of each symbol, it is just a matter of following the formula on the Wikipedia page. Entropy can be defined as

$$E = -\sum_i p_i \log_2 p_i$$

where $p_i$ is the probability of symbol $i$. For example, if "4" occurs on average 1 in 2 symbols, then $p = 1/2$ and its term in the sum is $-\tfrac{1}{2}\log_2\tfrac{1}{2} = \tfrac{1}{2}$, which means that on average we would need 1/2 bit per symbol to tell whether it was a "4" or not.

The thing with relative entropy, or Kullback-Leibler divergence, is that it requires two distributions: we need some second distribution to compare against. If you have a probability distribution you wish to test your string against, then you could calculate a relative entropy.

Polyphonic music files were analyzed using the set of symbols that produced the Minimal Entropy Description, which we call the Fundamental Scale.
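As a rough illustration of the entropy calculation for a string, here is a minimal Python sketch. It assumes that symbol probabilities are estimated from the character frequencies of the string itself, which is only one possible choice; the function names `shannon_entropy` and `symbol_contribution` are made up for this example.

```python
from collections import Counter
from math import log2


def shannon_entropy(text: str) -> float:
    """Empirical Shannon entropy of `text`, in bits per symbol.

    Assumption: each character is one symbol, and its probability is
    estimated as (occurrences of the character) / (length of the string).
    """
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    # Sum the per-symbol terms -p * log2(p) over all distinct symbols.
    return sum(-(n / total) * log2(n / total) for n in counts.values())


def symbol_contribution(p: float) -> float:
    """Term -p * log2(p) that a symbol with probability p adds to the sum."""
    return -p * log2(p) if p > 0 else 0.0
```

With these definitions, `symbol_contribution(0.5)` is 0.5, matching the "4 occurs 1 in 2 symbols" example, and `shannon_entropy("4141")` is 1.0 bit per symbol because both symbols contribute 0.5 each.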

Relative entropy is a measure of how close or distant one probability distribution is to another.
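To make the two-distribution requirement concrete, the following Python sketch compares the empirical distribution of a string against a reference distribution. The helper names `empirical_distribution` and `relative_entropy` are hypothetical, and the choice to return infinity when the reference gives zero probability to an observed symbol is an assumption of this sketch.

```python
from collections import Counter
from math import log2


def empirical_distribution(text: str) -> dict:
    """Estimate symbol probabilities from character frequencies in `text`."""
    total = len(text)
    return {symbol: n / total for symbol, n in Counter(text).items()}


def relative_entropy(p: dict, q: dict) -> float:
    """Kullback-Leibler divergence D(P || Q) in bits.

    `p` and `q` map symbols to probabilities. If `q` assigns zero
    probability to a symbol that `p` uses, the divergence is infinite.
    """
    divergence = 0.0
    for symbol, p_s in p.items():
        if p_s == 0:
            continue  # terms with p = 0 contribute nothing
        q_s = q.get(symbol, 0.0)
        if q_s == 0:
            return float("inf")
        divergence += p_s * log2(p_s / q_s)
    return divergence


# Usage: test a string against a uniform reference over the symbols "0" and "1".
observed = empirical_distribution("0000")
uniform = {"0": 0.5, "1": 0.5}
distance = relative_entropy(observed, uniform)
```

Note that the measure is not symmetric: `relative_entropy(p, q)` and `relative_entropy(q, p)` generally differ, so it matters which distribution is treated as the reference.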

Entropy is a measure of how much information there is in one source with one probability distribution.
